The Power of Data Ingestion Analysis: Transforming Risk Management and Investment Analytics

Sean Mentore

Every data problem in financial services is an ingestion problem.

I have said this to a lot of people over the past few years and the pushback is always the same. The firm thinks their problem is the calculation engine. Or the reporting layer. Or the vendor. They are usually wrong.

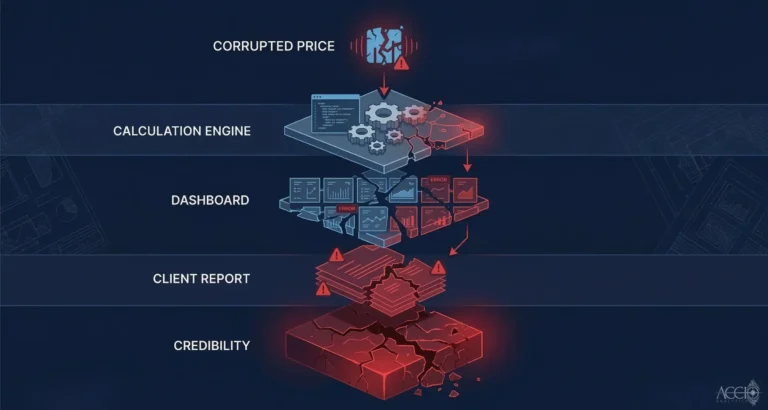

When a client report shows incorrect numbers, you trace it back. Most of the time you end up at the same place. The data that entered the system was wrong, inconsistent, or structured differently than what the system expected. The calculation engine did exactly what it was told. The dashboard displayed exactly what it received. The problem originated upstream, at the point where data from the outside world entered the internal system.

That is the ingestion layer. And for most financial institutions, it is unmanaged.

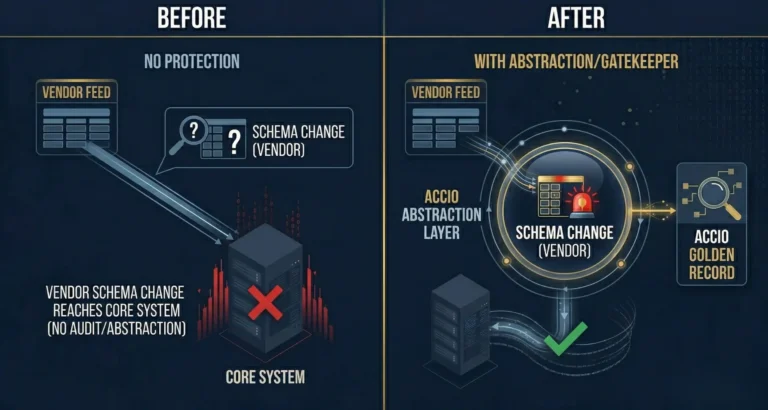

What I mean by unmanaged is this: there is no systematic process for validating incoming data before it touches the core systems. Bloomberg delivers a feed. The ETL loads it. The calculation engine runs. Somewhere in that chain, a field name changed, a value was null where it should have been zero, or two vendors priced the same instrument differently. None of those issues were caught. They propagated downstream.
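To make those three failure modes concrete, here is a minimal sketch of the kind of checks that would catch them at the door. It is illustrative only: the field names in `EXPECTED_FIELDS`, the vendor labels, and the 0.1% tolerance are assumptions made for the example, not a description of any particular feed or product.

```python
from typing import Any

# Hypothetical expected schema for an incoming price record (assumed for illustration).
EXPECTED_FIELDS = {"instrument_id", "price", "currency", "as_of"}


def check_record(record: dict[str, Any]) -> list[str]:
    """Return a list of human-readable issues found in one incoming record."""
    issues = []
    present = set(record)
    missing = EXPECTED_FIELDS - present
    unexpected = present - EXPECTED_FIELDS
    if missing:
        issues.append(f"missing fields: {sorted(missing)}")
    if unexpected:
        # A renamed field usually shows up as one missing name plus one unexpected name.
        issues.append(f"unexpected fields: {sorted(unexpected)}")
    for name in sorted(EXPECTED_FIELDS & present):
        # Flag nulls explicitly instead of letting them be read as zero downstream.
        if record[name] is None:
            issues.append(f"null value in {name}")
    return issues


def price_disagreements(
    quotes: dict[str, float], tolerance: float = 0.001
) -> list[tuple[str, str]]:
    """Return vendor pairs whose prices for one instrument differ by more than
    the given relative tolerance (an assumed threshold)."""
    vendors = sorted(quotes)
    pairs = []
    for i, a in enumerate(vendors):
        for b in vendors[i + 1:]:
            reference = max(abs(quotes[a]), abs(quotes[b]))
            if reference > 0 and abs(quotes[a] - quotes[b]) / reference > tolerance:
                pairs.append((a, b))
    return pairs
```

Calling `check_record` on a record where `price` arrived as `px` reports both the missing and the unexpected name, which is usually enough to spot a rename. `price_disagreements` takes one instrument's quotes keyed by vendor and returns the pairs that need a second look.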

The Accio Ingestion Engine is built to catch them. It sits at the front door of your data infrastructure. It ingests from multiple vendor feeds, normalizes each one to a consistent schema, validates every incoming record against expected patterns, and routes exceptions before they reach your core. Every transformation is logged. Every decision is auditable. You can trace any output back to the raw input it came from.
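The shape of that flow (normalize, validate, route, log) can be sketched in a few dozen lines. This is not the Accio Ingestion Engine's code; it is a simplified illustration under stated assumptions. The vendor names, the field mappings in `FIELD_MAPS`, the validation rules, and the use of a SHA-256 hash of the raw record as the lineage key are all hypothetical choices made for the example.

```python
import hashlib
import json
from dataclasses import dataclass, field

# Hypothetical per-vendor field mappings onto one internal schema (assumed names).
FIELD_MAPS = {
    "vendor_a": {"id": "instrument_id", "px_last": "price"},
    "vendor_b": {"instrument": "instrument_id", "close": "price"},
}


@dataclass
class IngestionResult:
    accepted: list = field(default_factory=list)    # records safe to pass downstream
    exceptions: list = field(default_factory=list)  # records held back for review
    audit_log: list = field(default_factory=list)   # one entry per record, pass or fail


def fingerprint(raw: dict) -> str:
    """Stable hash of the raw input, so any output can be traced back to it."""
    return hashlib.sha256(json.dumps(raw, sort_keys=True).encode()).hexdigest()


def normalize(vendor: str, raw: dict) -> dict:
    """Rename vendor-specific fields to the internal schema; unmapped fields are dropped."""
    mapping = FIELD_MAPS[vendor]
    return {target: raw.get(source) for source, target in mapping.items()}


def validate(record: dict) -> list[str]:
    """Return the reasons a normalized record fails; an empty list means it passes."""
    reasons = []
    if not record.get("instrument_id"):
        reasons.append("missing instrument_id")
    price = record.get("price")
    if not isinstance(price, (int, float)) or isinstance(price, bool) or price <= 0:
        reasons.append("price missing or not positive")
    return reasons


def ingest(vendor: str, raw_records: list[dict]) -> IngestionResult:
    """Normalize and validate each record, route it, and log the decision."""
    result = IngestionResult()
    for raw in raw_records:
        record = normalize(vendor, raw)
        reasons = validate(record)
        lineage = {"vendor": vendor, "raw_sha256": fingerprint(raw)}
        target = result.exceptions if reasons else result.accepted
        target.append({**record, "lineage": lineage})
        result.audit_log.append(
            {**lineage, "status": "rejected" if reasons else "accepted", "reasons": reasons}
        )
    return result
```

Every record, accepted or rejected, carries the vendor name and the hash of its raw input, and the audit log records the decision and the reasons for it. Grouping `audit_log` entries by `vendor` is one simple way to see which feed introduced an anomaly.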

This is not a sophisticated technical concept. It is a discipline that most institutions have never applied systematically because the legacy systems were not built to support it. The batch process runs at night. Problems show up in the morning. The trace happens manually, if at all.

Real-time ingestion with systematic validation changes the operational reality. You know within seconds whether incoming data passed or failed. You know exactly which vendor feed introduced an anomaly. You know what was done with it.

For risk management, this matters because risk calculations are only as accurate as the data driving them. For investment analytics, it matters because any analysis built on unvalidated data is an analysis built on assumptions you have not made explicit.

Fix the ingestion layer. Everything above it gets more reliable.

By Sean Mentore, Co-Founder & Chief Architect, Accio Analytics

Technical Whiteboard Session

Sean is offering to sit down with your lead architect and head of operations for a 30-minute technical whiteboard session in which he will:

- Map your current ETL flow for data ingestion

- Identify the specific points where your system leaks capital

- Identify where your data lineage fails the Proof of Origin test

