Machine Learning for Tail Risk: Case Studies

Accio Analytics Inc.


Machine learning is reshaping how financial institutions manage tail risk: the rare, extreme market events that can devastate portfolios. Traditional approaches to estimating Value-at-Risk (VaR) and Expected Shortfall (ES) often fall short during market stress because they rely on assumptions that collapse under extreme conditions. Machine learning offers a way to identify complex patterns, model extreme scenarios, and deliver real-time insights that those methods struggle to match.

Why This Matters to You

- For Executives: Tail events can destroy years of gains. Machine learning offers a way to anticipate and mitigate these risks, protecting both portfolios and client trust.

- For Risk Teams: Advanced models like Tail-GAN and CoFiE-NN outperform legacy approaches, offering more accurate tail risk estimates and fewer VaR breaches during stress periods.

Key Takeaways

- Machine Learning Beats Legacy Models: Techniques like Tail-GAN and hybrid models deliver sharper insights into extreme risks, outperforming GARCH and EVT methods.

- Real-Time Monitoring is a Game-Changer: Platforms now provide live stress testing and anomaly detection, replacing outdated batch reports.

- Operational Risks of AI: While AI investments can enhance efficiency, they also introduce new risks, such as external fraud and compliance failures, which must be actively managed.

This article dives into case studies and actionable strategies to help your team integrate machine learning into tail risk management. Let’s explore how these tools are transforming the field.

Case Study 1: Tail-GAN for Multi-Asset Scenario Simulation

How Tail-GAN Works

Tail-GAN is a generative adversarial network designed to simulate multi-asset return scenarios, particularly focusing on extreme co-movements and fat-tailed characteristics. It operates using a generator–discriminator framework, enhanced by a risk-aware module. This module utilizes jointly elicitable scoring functions for Value at Risk (VaR) and Expected Shortfall (ES), ensuring consistency in risk metrics through tailored loss functions and critic networks. The result? Accurate reproduction of these metrics at specific confidence levels, such as 97.5% or 99%.
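To make the idea of a jointly elicitable score concrete, here is a minimal sketch of one well-known member of that family, often called the FZ0 loss. It is an illustration only: the exact score used inside Tail-GAN may differ, and the function names, sample, and forecast values below are assumptions made for the example.

```python
import math
import random


def fz0_score(y, var, es, alpha):
    """FZ0 joint score for one return y and a (VaR, ES) forecast.

    Returns are signed (losses are negative), so both forecasts must be
    negative with es <= var. A lower average score is a better forecast.
    """
    if not (es <= var < 0):
        raise ValueError("expected es <= var < 0 for a left-tail forecast")
    hit = 1.0 if y <= var else 0.0
    return -hit * (var - y) / (alpha * es) + var / es + math.log(-es) - 1.0


def mean_score(returns, var, es, alpha):
    """Average FZ0 score of a fixed (VaR, ES) pair over a return sample."""
    return sum(fz0_score(y, var, es, alpha) for y in returns) / len(returns)


def empirical_var_es(returns, alpha):
    """Historical VaR (alpha-quantile) and ES (mean of the returns at or below it)."""
    ordered = sorted(returns)
    k = max(1, int(alpha * len(ordered)))
    tail = ordered[:k]
    return tail[-1], sum(tail) / k


# Hypothetical data: standard normal returns stand in for a real return series.
rng = random.Random(0)
returns = [rng.gauss(0.0, 1.0) for _ in range(50_000)]
alpha = 0.05

var_hat, es_hat = empirical_var_es(returns, alpha)
good = mean_score(returns, var_hat, es_hat, alpha)
too_mild = mean_score(returns, -1.2, -1.5, alpha)    # understates the tail
too_severe = mean_score(returns, -2.0, -2.6, alpha)  # overstates the tail
print(f"sample VaR/ES: {good:.3f}  too mild: {too_mild:.3f}  too severe: {too_severe:.3f}")
```

The point of the comparison is that the score penalizes both understating and overstating the tail, which is what lets a training loss built on it pull generated scenarios toward the correct VaR and ES together.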

What makes Tail-GAN stand out is its ability to capture regime-dependent nonlinearities directly from data. The generator can adapt to market conditions like volatility regimes, yield curve dynamics, or credit spreads, allowing it to simulate extreme scenarios that align with various macroeconomic environments. These scenarios, while not present in historical data, remain statistically aligned with observed stress periods, offering a fresh perspective on potential risks.

Results from Market Data Testing

When tested on U.S. market data, Tail-GAN demonstrated clear advantages over traditional models in handling extreme VaR/ES quantiles. Backtesting revealed better performance compared to models like GARCH, EGARCH, and simple historical simulation. One key improvement was the reduction of clustered VaR breaches during stress periods, a common shortfall of parametric models.

For multi-asset portfolios, Tail-GAN excelled in capturing time-varying correlations and tail dependencies, areas where Gaussian models and static copulas often fall short. It generated realistic combinations of shocks that mirrored crisis dynamics, producing portfolio-level tail distributions with heavier and more realistic loss tails. This led to higher ES estimates, highlighting risks that legacy methods tend to underestimate.

These findings underscore the potential for Tail-GAN to redefine risk modeling, offering a more accurate view of extreme scenarios and their impact on portfolios.

What Financial Institutions Can Learn

To integrate Tail-GAN into risk management processes, institutions should first establish a unified time series data repository and GPU-enabled infrastructure for efficient model training. Tail-GAN outputs can then be connected to existing risk systems via APIs or microservices, enabling seamless access to scenario sets without disrupting current workflows.

For instance, platforms like Accio Quantum Core make it possible to generate tail scenarios on demand – whether at the desk, portfolio, or enterprise level – triggered by intraday market changes or position updates. To ensure effective use, institutions should implement transparent documentation, independent out-of-sample backtesting, and ongoing performance monitoring. These steps not only support governance but also transform static tail risk reports into dynamic, real-time tools for monitoring and decision-making.

Case Study 2: Machine Learning for Extreme Event Modeling

Machine Learning Methods for Tail Risk Modeling: Comparison of Approaches

Comparing Machine Learning Techniques

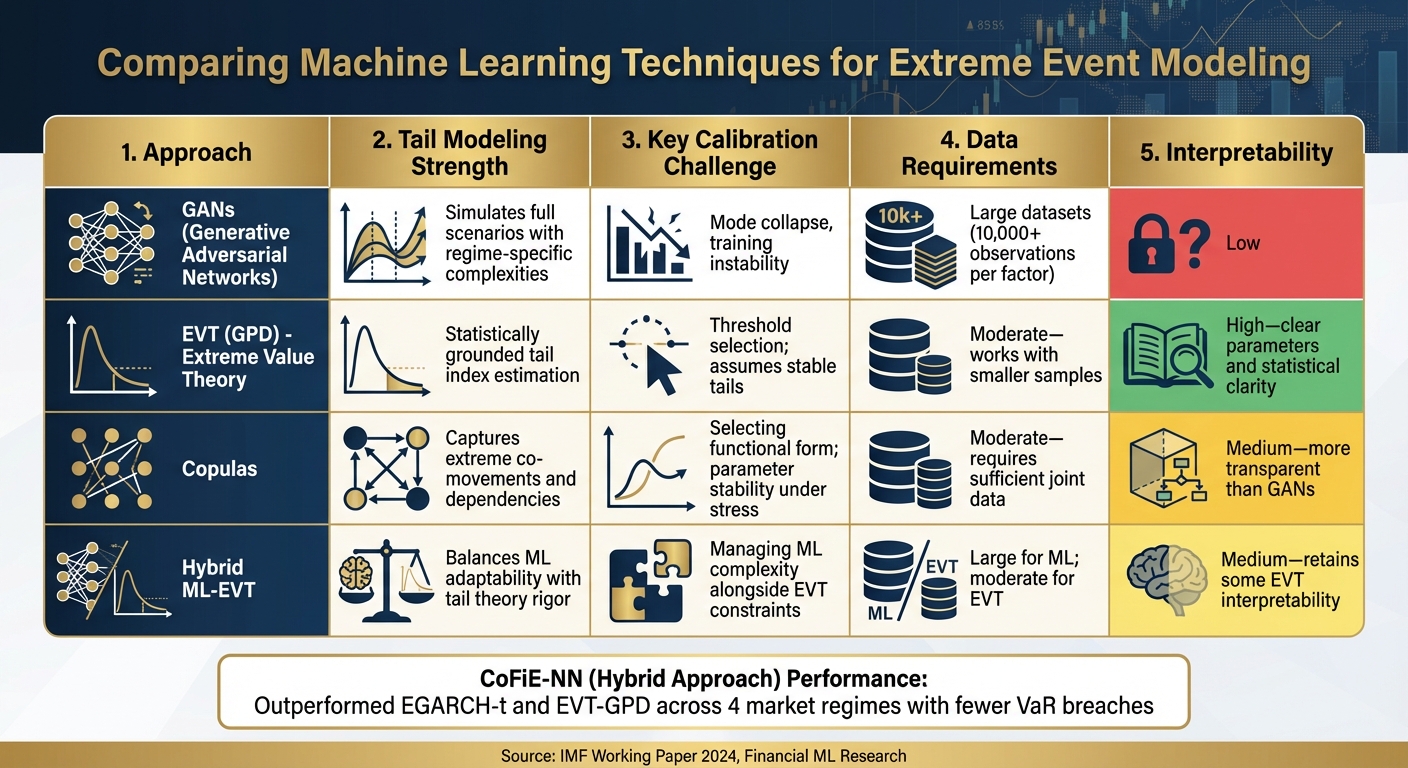

Machine learning offers a range of tools for modeling extreme events, making it possible to perform precise stress testing. Understanding the strengths and limitations of these methods helps risk teams select the most suitable approach for their needs.

Generative Adversarial Networks (GANs) are particularly effective at simulating full scenarios, capturing complex interactions across different assets. These models can replicate crisis dynamics and tail dependencies that align with historical stress events. However, GANs require extensive datasets – typically tens of thousands of observations spanning various market conditions – and often face challenges like training instability and mode collapse, which complicate calibration efforts.[6][7]

Extreme Value Theory (EVT), especially when using the Generalized Pareto Distribution (GPD), offers a statistically grounded method for modeling extreme losses above high thresholds. EVT provides interpretable outputs, such as tail indices, and works well with smaller datasets. The main difficulty lies in selecting the threshold: setting it too low introduces bias, while setting it too high reduces the number of usable data points. Another limitation is EVT’s reliance on stable tail behavior, which may not hold during rapid shifts in market conditions.[6][8]
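The peaks-over-threshold workflow described above can be sketched in a few lines. This is a simplified illustration, not a production fit: a method-of-moments estimator is swapped in for the maximum-likelihood fit normally used, purely to keep the example dependency-free, and the simulated Pareto losses, the 95% threshold, and all function names are assumptions.

```python
import random
import statistics


def fit_gpd_moments(excesses):
    """Method-of-moments GPD fit (shape xi, scale sigma); needs xi < 0.5."""
    m = statistics.fmean(excesses)
    s2 = statistics.variance(excesses)
    ratio = m * m / s2
    return 0.5 * (1.0 - ratio), 0.5 * m * (1.0 + ratio)


def pot_var_es(losses, threshold_q, q):
    """Peaks-over-threshold VaR and ES at level q for positive losses.

    Assumes the fitted shape is not (numerically) zero and is below 1.
    """
    ordered = sorted(losses)
    n = len(ordered)
    u = ordered[int(threshold_q * n)]             # empirical threshold
    excesses = [x - u for x in ordered if x > u]  # losses above the threshold
    xi, sigma = fit_gpd_moments(excesses)
    tail_frac = len(excesses) / n                 # N_u / n
    var_q = u + sigma / xi * (((1.0 - q) / tail_frac) ** (-xi) - 1.0)
    es_q = var_q / (1.0 - xi) + (sigma - xi * u) / (1.0 - xi)
    return var_q, es_q, xi, sigma, u


# Hypothetical loss sample: Pareto with tail index 5, so the true GPD shape is 0.2.
rng = random.Random(42)
losses = [rng.paretovariate(5.0) for _ in range(20_000)]
var99, es99, xi, sigma, u = pot_var_es(losses, threshold_q=0.95, q=0.99)
print(f"threshold={u:.3f} xi={xi:.3f} sigma={sigma:.3f} VaR99={var99:.3f} ES99={es99:.3f}")
```

Rerunning the sketch with a different `threshold_q` is a quick way to see the bias and variance trade-off in threshold selection: a lower threshold keeps more data but strains the GPD approximation, while a higher one leaves few excesses to fit.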

Copula models are valuable for understanding how extreme movements in different risk factors occur together. Tail-sensitive copulas, such as t-copulas, can capture the increased correlations seen during crises, which standard Gaussian copulas often miss. However, selecting the right copula family and achieving stable parameter estimates – especially under stressed conditions – can be difficult.[6][8]
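A small simulation shows why the choice of copula family matters. The sketch below draws pairs with the same correlation under a Gaussian and a Student-t dependence structure and compares how often both factors land in their worst 1% together. The correlation of 0.5, the 3 degrees of freedom, and the function names are illustrative assumptions.

```python
import math
import random


def simulate_pairs(n, rho, dof=None, seed=0):
    """Correlated pairs: Gaussian if dof is None, otherwise bivariate Student-t."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(n):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rho * z1 + math.sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
        if dof is not None:
            # One shared chi-square draw scales both coordinates, which is
            # what produces joint extremes in the t case.
            scale = math.sqrt(dof / rng.gammavariate(dof / 2.0, 2.0))
            z1, z2 = z1 * scale, z2 * scale
        pairs.append((z1, z2))
    return pairs


def joint_tail_prob(pairs, q):
    """P(second factor in its worst q | first factor in its worst q).

    Cutoffs are empirical quantiles of each margin, so the result depends
    only on the dependence structure (the copula), not on the margins.
    """
    k = int(q * len(pairs))
    cut1 = sorted(p[0] for p in pairs)[k]
    cut2 = sorted(p[1] for p in pairs)[k]
    first = sum(1 for a, _ in pairs if a < cut1)
    both = sum(1 for a, b in pairs if a < cut1 and b < cut2)
    return both / first


gauss_tail = joint_tail_prob(simulate_pairs(100_000, rho=0.5), q=0.01)
t_tail = joint_tail_prob(simulate_pairs(100_000, rho=0.5, dof=3), q=0.01)
print(f"Gaussian: {gauss_tail:.2f}  Student-t (3 dof): {t_tail:.2f}")
```

With identical correlation, the t structure yields a visibly higher chance of a joint extreme, which is the crisis-time behavior the Gaussian copula tends to miss.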

A 2024 IMF working paper introduced CoFiE-NN, a hybrid approach that combines Cornish-Fisher expansions with LSTM neural networks to predict tail risks. Tested across four market regimes – pre-global financial crisis, the Global Financial Crisis, the European debt crisis, and COVID-19 – CoFiE-NN outperformed both EGARCH-t and EVT-GPD models on most statistical metrics. It also delivered fewer and less clustered Value-at-Risk (VaR) breaches compared to EVT-GPD, demonstrating better control over extreme tail risks. Notably, the model surpassed EVT-GPD in three of the four regimes, suggesting that it adapts well to changing market conditions.[2]
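For readers unfamiliar with the Cornish-Fisher expansion, the sketch below shows only that ingredient: adjusting a normal quantile for skewness and excess kurtosis. It is not the CoFiE-NN model itself. The moment values are hypothetical constants, whereas a hybrid model would presumably supply time-varying forecasts of them, and the function names are illustrative.

```python
from statistics import NormalDist


def cornish_fisher_quantile(alpha, skew, excess_kurt):
    """Normal quantile adjusted for skewness and excess kurtosis."""
    z = NormalDist().inv_cdf(alpha)
    return (
        z
        + (z**2 - 1.0) * skew / 6.0
        + (z**3 - 3.0 * z) * excess_kurt / 24.0
        - (2.0 * z**3 - 5.0 * z) * skew**2 / 36.0
    )


def cornish_fisher_var(mu, sigma, skew, excess_kurt, alpha):
    """Left-tail VaR as a signed return: location plus scaled adjusted quantile."""
    return mu + sigma * cornish_fisher_quantile(alpha, skew, excess_kurt)


# Hypothetical daily moments: zero mean, 1.5% volatility, negative skew, fat tails.
normal_var = cornish_fisher_var(0.0, 0.015, 0.0, 0.0, alpha=0.01)
cf_var = cornish_fisher_var(0.0, 0.015, -0.5, 3.0, alpha=0.01)
print(f"normal 1% VaR: {normal_var:.4f}  Cornish-Fisher 1% VaR: {cf_var:.4f}")
```

With negative skew and positive excess kurtosis, the adjusted 1% quantile sits further into the loss tail than the plain normal quantile.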

Research highlights the benefits of combining multiple machine learning techniques with regularization to improve tail risk estimates. Studies have shown that ensemble approaches yield statistically significant improvements in 1%–5% VaR backtest scores compared to single models. This is particularly important because extreme events are rare, and deep learning models without tail constraints can sometimes produce unreliable quantiles.[4][8]

| Approach | Tail Modeling Strength | Key Calibration Challenge | Data Requirements | Interpretability |
|---|---|---|---|---|
| GANs | Simulates full scenarios with regime-specific complexities | Mode collapse, training instability | Large datasets (10,000+ observations per factor) | Low |
| EVT (GPD) | Statistically grounded tail index estimation | Threshold selection; assumes stable tails | Moderate – works with smaller samples | High – clear parameters and statistical clarity |
| Copulas | Captures extreme co-movements and dependencies | Selecting functional form; parameter stability under stress | Moderate – requires sufficient joint data | Medium – more transparent than GANs |
| Hybrid ML-EVT | Balances ML adaptability with tail theory rigor | Managing ML complexity alongside EVT constraints | Large for ML; moderate for EVT | Medium – retains some EVT interpretability |

These findings provide actionable insights for improving stress testing frameworks. Choosing the right machine learning model is essential for monitoring dynamic risks and predicting extreme events effectively.

Using Machine Learning in Stress Testing

Machine learning is transforming stress testing by enabling real-time, data-driven scenario generation. These models go beyond static assumptions, capturing joint movements, contagion effects, and flight-to-quality dynamics observed during historical crises.[6][7]

For instance, GANs trained on past crisis data can simulate multi-asset paths that replicate historical stress patterns while also generating plausible, unseen extreme scenarios. This approach replaces static shocks – like a fixed "200 basis points rate hike" – with dynamic severity scaling that adapts to current conditions, such as volatility, liquidity, or macroeconomic shifts.[7] The result is more reliable tail loss estimates, which are critical for setting economic capital, risk limits, and contingency funding strategies.

Machine learning also enhances stressed VaR and expected shortfall calculations. The CoFiE-NN model demonstrated its ability to pass regulatory backtests, including Kupiec unconditional coverage, Christoffersen independence, and Lopez quadratic loss tests, more consistently than traditional models. For U.S. banks operating under Basel and CCAR guidelines, this translates to fewer backtesting exceptions and stronger alignment with actual crisis outcomes.[2][7]
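Of the backtests named above, the Kupiec unconditional coverage test is the simplest to reproduce. The sketch below is a minimal version that checks whether the observed number of VaR breaches is consistent with the stated confidence level. The breach counts are made-up examples, and the function name is an assumption.

```python
import math


def kupiec_pof(breaches, n_obs, var_level):
    """Kupiec proportion-of-failures test.

    Returns the likelihood-ratio statistic and its p-value under a
    chi-square distribution with one degree of freedom.
    """
    p = 1.0 - var_level  # expected breach probability
    x = breaches

    def loglik(prob):
        # Binomial log-likelihood, treating 0 * log(0) as 0.
        ll = 0.0
        if x > 0:
            ll += x * math.log(prob)
        if n_obs - x > 0:
            ll += (n_obs - x) * math.log(1.0 - prob)
        return ll

    lr = max(0.0, -2.0 * (loglik(p) - loglik(x / n_obs)))
    # Survival function of chi-square(1): P(X > lr) = erfc(sqrt(lr / 2)).
    p_value = math.erfc(math.sqrt(lr / 2.0))
    return lr, p_value


# Hypothetical one-year backtests of a 99% VaR (about 2.5 breaches expected).
lr_ok, p_ok = kupiec_pof(breaches=3, n_obs=250, var_level=0.99)
lr_bad, p_bad = kupiec_pof(breaches=10, n_obs=250, var_level=0.99)
print(f"3 breaches: LR={lr_ok:.2f}, p={p_ok:.3f}")
print(f"10 breaches: LR={lr_bad:.2f}, p={p_bad:.4f}")
```

This test only looks at the breach count. Whether breaches cluster in time is what the Christoffersen independence test addresses, which is why the two are usually reported together.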

Copula models, when combined with machine learning, allow for time-varying tail dependence, capturing how correlations spike during market stress. Unlike static correlation matrices, machine learning techniques like regression and recurrent networks enable copula parameters to adjust dynamically to shifts in volatility, liquidity, or broader economic conditions. This leads to more accurate joint loss distributions for portfolio stress tests, helping identify which business lines or asset classes are most vulnerable under extreme scenarios.[7][8]

Platforms like Accio Quantum Core make it feasible to integrate these advanced models into daily risk workflows. By shifting from batch processing to real-time capabilities, risk teams can generate tail scenarios on demand – triggered by intraday market changes or portfolio updates – and view the results in live dashboards. This evolution turns stress testing into a continuous monitoring tool, empowering teams to make proactive decisions during periods of rapid market deterioration.

Case Study 3: AI Investments and Operational Risk in Banking

Analyzing Tail Loss Drivers Through Regression

This case study examines how AI investments are reshaping operational risk landscapes in banking, particularly when it comes to tail losses.

A 2025 study conducted by the Federal Reserve Bank of Boston revealed a concerning trend: U.S. bank holding companies with higher AI investments experienced more frequent and severe tail operational losses. Researchers, led by McLemore, analyzed quarterly data from major U.S. banks, focusing on loss events that exceeded the 90th and 95th percentiles. Their regression models showed a clear link between increased AI spending and heightened exposure to extreme loss events. [3]

Among these losses, external fraud emerged as the top contributor, accounting for 42% of tail losses at banks with significant AI investments. Losses at the 99th percentile averaged $750 million per event. Compliance failures also surged by 35% in this group, often tied to automation errors in AI-driven transaction monitoring systems, as highlighted in OCC risk reports from 2023–2024. [1] A striking example from 2023 involved a major U.S. bank with over $1 trillion in assets. The bank reported $1.2 billion in external fraud losses – a tail event at the 99.5th quantile – due to model biases that failed to detect unusual patterns in high-frequency trading oversight. This incident was detailed in the bank’s 10-K filing and FDIC loss database. [1]

Quantile regression models, focusing on the 95th and 99th percentiles, identified AI investment as a significant driver of tail losses, with a coefficient of 0.32 (p<0.01). Random forest models further confirmed non-linear effects: banks spending over $100 million on AI faced an 18% increase in loss variance. [1][4] Analysis of Call Report data from 2020 to 2024 revealed that every additional $10 million in AI investment increased the probability of tail losses by 22%, compared to an 8% increase for general IT spending. At the 99th quantile, the elasticity of AI investment stood at 1.45. [4]
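Quantile regression differs from ordinary regression in the loss it minimizes. The sketch below shows that loss, the pinball (check) loss, and confirms on simulated data that the constant minimizing it at the 0.95 level is the sample 95th percentile. It does not reproduce the study's specification: the lognormal losses and function names are assumptions, and a full quantile regression would replace the constant with a linear function of covariates such as AI spending.

```python
import random


def pinball_loss(y, pred, tau):
    """Check loss: under-prediction costs tau per unit, over-prediction 1 - tau."""
    diff = y - pred
    return tau * diff if diff >= 0 else (tau - 1.0) * diff


def mean_pinball(values, pred, tau):
    """Average pinball loss of a constant prediction over a sample."""
    return sum(pinball_loss(y, pred, tau) for y in values) / len(values)


# Hypothetical operational losses with a heavy right tail.
rng = random.Random(7)
losses = [rng.lognormvariate(0.0, 1.0) for _ in range(5_000)]
ordered = sorted(losses)
tau = 0.95

# Search a grid of observed loss levels for the best constant prediction.
candidates = ordered[::50]
best = min(candidates, key=lambda c: mean_pinball(losses, c, tau))
print(f"best constant: {best:.3f}  sample 95th percentile: {ordered[4750]:.3f}")
```

Because missing low costs 19 times more than missing high at the 0.95 level, the fitted line is pulled toward the upper tail of the loss distribution instead of its mean.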

IMF working papers shed light on why this happens. Experts argue that AI models’ lack of transparency and gaps in training data make them vulnerable to adversarial attacks, which exploit the opaque, non-linear nature of these systems. Their recommendation? A hybrid approach combining human oversight with AI could reduce quantile excesses by up to 30%. [2]

These findings underscore the need for immediate action to balance innovation with robust risk management. Dynamic monitoring, supported by advanced platforms, is essential to mitigate these operational risks effectively.

What Banking Executives Should Consider

For banking leaders, the challenge lies in harnessing AI’s potential without increasing operational vulnerabilities. The data points to actionable strategies for reducing tail loss exposure while maintaining AI-driven growth.

Stress test AI models for extreme scenarios. Banks should conduct quantile-specific stress testing on a quarterly basis. For example, simulate 99th percentile fraud scenarios to identify potential AI system failures under extreme conditions. Allocating at least 20% of AI budgets to tools like SHAP, which enhance model interpretability, can help address the transparency issues that allow anomalies to go unnoticed. [1][2]

Set real-time monitoring thresholds. Establish live dashboards to flag when AI spending exceeds 12% of IT budgets or when fraud quantiles surpass the 95th percentile. Using ensemble models like random forests can enable early warnings, allowing risk teams to make dynamic adjustments without overhauling entire systems. Platforms such as Accio Quantum Core make this approach feasible by shifting from batch processing to real-time monitoring, helping teams respond to emerging risks as they occur. [1][4]

Strengthen controls for fraud and compliance. Focus on areas most affected by AI investments. This could include maintaining human oversight for high-value transactions flagged by AI systems or adding redundant verification layers for compliance decisions made by automated tools. These measures aim to preserve AI’s efficiency gains while mitigating the amplified risks documented in the Federal Reserve’s research. [3]
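The monitoring thresholds described above can be expressed as a simple rule check. The sketch below is a minimal illustration, assuming a hypothetical data feed: the 12% AI-spend share and the 95th percentile fraud trigger come from the text, while the function names, alert labels, and sample figures are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    name: str
    value: float
    limit: float


def empirical_quantile(history, q):
    """Order-statistic estimate of the q-quantile of past observations."""
    ordered = sorted(history)
    return ordered[min(len(ordered) - 1, int(q * len(ordered)))]


def check_thresholds(ai_spend, it_budget, fraud_history, latest_fraud_loss,
                     ai_share_limit=0.12, fraud_q=0.95):
    """Return the alerts raised by the latest observation (hypothetical rules)."""
    alerts = []
    ai_share = ai_spend / it_budget
    if ai_share > ai_share_limit:
        alerts.append(Alert("ai_share_of_it_budget", ai_share, ai_share_limit))
    fraud_limit = empirical_quantile(fraud_history, fraud_q)
    if latest_fraud_loss > fraud_limit:
        alerts.append(Alert("fraud_loss_above_quantile", latest_fraud_loss, fraud_limit))
    return alerts


# Hypothetical figures in $ millions.
history = [float(x) for x in range(1, 101)]
stressed = check_thresholds(15.0, 100.0, history, latest_fraud_loss=500.0)
calm = check_thresholds(8.0, 100.0, history, latest_fraud_loss=10.0)
for alert in stressed:
    print(f"{alert.name}: {alert.value:.2f} exceeds limit {alert.limit:.2f}")
```

In a live setting the same check would run on each new data point rather than on a quarterly batch, with the fraud history held in a rolling window so the quantile limit tracks recent conditions.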


Case Study 4: Random Forests and Neural Networks for Hedging

Predicting Market Downturns with Machine Learning

This section delves into how Random Forests and Neural Networks are reshaping hedging strategies to address one of the most pressing challenges for portfolio managers: predicting significant market downturns. These machine learning models analyze complex datasets – like volatility surfaces, options pricing, and market microstructure signals – to forecast when monthly losses of 5%–10% or more might occur. With these insights, portfolios can adjust their hedging strategies in advance.

Random Forests are particularly effective at identifying nonlinear patterns in market signals such as the VIX, put-call skew, term structure, and credit spreads. By aggregating hundreds of decision trees trained on diverse historical data, these models provide a clear and reliable view of changing market conditions. Meanwhile, LSTM Neural Networks excel at processing high-dimensional, noisy time-series data, such as intraday volatility and order book imbalances. Together, these models complement each other: Random Forests classify discrete "risk-on/risk-off" scenarios, while neural networks generate continuous risk scores to guide dynamic hedge ratios. This dual framework translates predictive outputs into actionable hedging strategies.

Firms began exploring these techniques after the 2008 financial crisis, and by the 2020s, platforms like BlackRock’s Aladdin incorporated them for tail risk stress testing during volatile periods [1].

The process works as follows: models produce daily tail-risk scores, which are mapped to specific hedging actions. In high-risk scenarios, portfolios shift toward protective instruments like puts or variance swaps, guided by forecasted Conditional Value-at-Risk (CVaR) at the 95th or 99th percentile. During calmer periods, hedge sizes are scaled back to manage costs. This approach ensures that hedges are proportionate to forecasted CVaR, covering potential losses at extreme percentiles over a defined time horizon.
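The mapping from tail-risk score to hedge size can be sketched as follows. This is one hypothetical rule, not the method used by any platform named here: it computes historical CVaR, works out what fraction of the tail loss exceeds a loss budget, and scales that fraction by a risk score between 0 and 1. All names and numbers are assumptions.

```python
def historical_cvar(returns, level):
    """Average of the worst (1 - level) share of returns (signed, losses negative)."""
    ordered = sorted(returns)
    k = max(1, int(round((1.0 - level) * len(ordered))))
    tail = ordered[:k]
    return sum(tail) / k


def hedge_ratio(risk_score, cvar, loss_budget):
    """Hypothetical rule: hedge the share of CVaR beyond the budget, scaled by score.

    risk_score is a model output in [0, 1]; loss_budget is a positive tolerated loss.
    """
    if cvar >= 0:
        return 0.0
    needed = max(0.0, (-cvar - loss_budget) / -cvar)
    return min(1.0, max(0.0, risk_score) * needed)


# Hypothetical monthly returns: 95 quiet months and 5 crash months.
returns = [0.01] * 95 + [-0.20] * 5
cvar95 = historical_cvar(returns, level=0.95)

high_risk = hedge_ratio(risk_score=1.0, cvar=cvar95, loss_budget=0.12)
calm = hedge_ratio(risk_score=0.25, cvar=cvar95, loss_budget=0.12)
print(f"CVaR95={cvar95:.2f}  hedge in high-risk regime={high_risk:.2f}  in calm regime={calm:.2f}")
```

The design choice worth noting is that hedge size depends on both the forecast tail loss and the score, so a low score scales protection back to contain carry costs without removing it entirely.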

Effects on Portfolio Performance

Backtesting shows that ML-driven hedging with Random Forests and Neural Networks can reduce tail risk by 30%–40%, lower realized volatility during market stress, and improve drawdown profiles, all while maintaining competitive returns. Tail risk is commonly measured using CVaR at the 95% or 99% level over one- to three-month horizons. For instance, a baseline 99% CVaR of –18% improved to approximately –11% to –12% when hedges were activated during high-risk periods identified by the models. That is an improvement of 6 to 7 percentage points, or roughly 33%–39% of the baseline expected loss beyond the Value-at-Risk (VaR) threshold [7][9].

While gross returns may underperform during extended bull markets due to the cost of carrying options, the strategy’s ability to adjust for extreme losses makes it particularly attractive to institutional investors and regulators in the U.S. The frequency and severity of drawdowns – such as monthly losses exceeding 10% – are significantly reduced, while average and median returns remain competitive.

Successful execution of this strategy hinges on several factors. Clean and timely data pipelines for options and volatility surfaces are critical. Tools like SHAP (SHapley Additive exPlanations) ensure model explainability, which is vital for governance and regulatory compliance. Regular recalibration of models is also necessary to account for shifts in market regimes.

Many firms in the U.S. are adopting modular, real-time systems to support these ML-driven hedging models. For example, Accio Quantum Core integrates with order management and risk systems, enabling continuous updates to tail-risk scores and hedge adjustments. Its microservice-based architecture supports fast feedback loops: as hedges are executed and market conditions evolve, updated data flows back into the models, keeping forecasts, hedge ratios, and tail-risk metrics current for decision-makers at the executive level [7].

Key Takeaways and Implementation Approaches

Comparing Machine Learning Methods

Machine learning is proving to be a game-changer compared to traditional models, as case studies clearly show. Take LightGBM, for example – a gradient boosting approach that achieved an 85% reduction in quantile loss for tail risk prediction compared to GARCH models. It also delivered a 67% higher log score for long-term forecasting[9]. Similarly, FactorVAE cut reconstruction error by 44% while identifying interpretable latent factors in S&P 500 data. This capability enabled earlier detection of regime shifts, such as the market recovery during COVID-19[5]. These methods excel at capturing fat-tailed distributions, which traditional models often fail to address.

Here’s a snapshot of how these methods stack up:

| Method | Tail Accuracy | Scalability | Computational Efficiency |
|---|---|---|---|
| LightGBM | 85% lower quantile loss vs. GARCH | High (excels on long horizons) | High |
| FactorVAE | 44% lower reconstruction error | Excellent (10 latent factors from S&P 500) | Moderate |
| Tail-GAN | High | Excellent for multi-asset simulation | Moderate |
| GARCH/Standard GAN | Baseline (poor with fat-tails) | Limited (parametric assumptions) | High |

The table highlights that LightGBM leads in tail forecasting accuracy, while FactorVAE provides interpretable insights for regime detection – a critical feature for communicating risk to executives and regulators. These comparisons emphasize the strategic value of adopting advanced machine learning methods for real-time risk analytics.

Real-Time Integration with Modular Systems

To capitalize on these machine learning advancements, real-time integration is crucial. Static overnight batch processing simply can’t keep up with today’s demands. Enter modular systems like Accio Quantum Core, which allow financial institutions to seamlessly incorporate these models into their existing setups. These API-driven systems transform static risk reports into live, actionable tail risk metrics.

Accio Quantum Core processes data in parallel, delivering insights in seconds. This speed empowers executives to set strategic parameters and receive immediate feedback, enabling them to adjust thresholds dynamically as market volatility changes. This flexibility is especially valuable during periods of market stress, where frequent rebalancing becomes essential. By moving from static models to dynamic, real-time systems, organizations can better respond to shifting conditions – a theme explored throughout earlier discussions.

What’s Next for Machine Learning in Tail Risk

The future of machine learning in tail risk management looks promising. Techniques like Tail-GAN are pushing the boundaries of scenario simulation, while Bayesian EGARCH hybrids are emerging as robust tools for tail forecasting in volatile markets. Institutions are also turning to ensemble methods, which combine multiple machine learning models to improve stress testing. These methods excel at modeling extreme events through approaches like copulas and deep generative models.

The industry is shifting toward real-time predictive intelligence as the new standard. Platforms now support continuous tail risk monitoring, intraday stress testing, and dynamic hedging – replacing outdated overnight reports. To stay ahead, executives should focus on out-of-sample validation using fat-tailed data, adopt modular systems for real-time applications, and monitor latent factors closely for early warnings of regime shifts. These proactive strategies enable quicker responses to market changes, better hedging decisions, and a stronger overall risk posture.

Conclusion

The case studies explored here highlight how machine learning is redefining tail risk management in financial services. For instance, LightGBM achieved an 85% reduction in quantile loss relative to GARCH [9][4], while FactorVAE demonstrated its ability to detect regime shifts early during the COVID-19 crisis [5]. These advanced models excel at identifying fat tails, nonlinear dependencies, and structural breaks, which traditional approaches such as GARCH-based VaR estimates often fail to capture. The result? Better capital allocation, faster alerts, and stronger resilience.

For U.S. asset management leaders, the message is clear: it’s time to move beyond static risk reports and embrace real-time monitoring. Firms that adopt modular machine learning solutions into their existing systems stand to gain a competitive edge. Tools like Accio Quantum Core are revolutionizing the field by turning batch processing into live, actionable insights. With features like real-time tracking of tail metrics, instant threshold adjustments, and alerts delivered in seconds, these platforms empower institutions to make swift, informed decisions.

Of course, integrating these solutions isn’t without its hurdles. Data engineering, governance, and real-time processing remain significant challenges. However, combining advanced techniques – such as gradient boosting for forecasts, GANs for generating scenarios, and factor models for regime detection – into a unified, API-driven framework can provide firms with the agility they need to tackle tail events before they spiral into significant losses. These integrated approaches mark the shift from reactive risk management to proactive tail risk intelligence.

Key advancements like intraday stress testing, multimodal data integration, and AI-assisted decision-making are quickly becoming indispensable for modern risk management. Financial institutions that take the lead by piloting machine learning models for tail risk, validating them with U.S. stress data, and embedding them into live workflows will be better equipped to handle future market crises with speed and confidence.

The shift is already happening. The question for asset management leaders is not if they should adopt machine learning for tail risk, but how quickly they can make it a core part of their strategy.

FAQs

How does Tail-GAN enhance tail risk management compared to traditional models like GARCH and EVT?

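Tail-GAN is a generative model trained with a risk-aware loss built on jointly elicitable scoring functions for VaR and ES, so the scenarios it produces are tuned to reproduce tail metrics at chosen confidence levels, not just average behavior. GARCH and EVT rest on parametric assumptions, and Gaussian or static-copula dependence structures often miss time-varying correlations and tail dependence. In the backtests described above, Tail-GAN produced fewer clustered VaR breaches during stress periods and heavier, more realistic portfolio loss tails than GARCH-family models and simple historical simulation.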

By identifying potential tail risks with speed and precision, this approach equips decision-makers to tackle market volatility head-on, moving beyond the limitations of retrospective analysis.

What are the risks of using AI in financial institutions?

AI brings a unique set of operational risks to financial institutions. These risks include reliance on flawed or biased data, which can skew insights and lead to poor decision-making. There’s also the danger of model errors, where inaccuracies in AI algorithms might result in costly mistakes. On top of that, cybersecurity vulnerabilities pose a serious threat, as sensitive data and systems could become targets for malicious attacks. Another challenge is the potential overreliance on automated systems, which may weaken essential human oversight and create blind spots in critical decisions.

Addressing these risks requires a proactive approach. Financial institutions should prioritize rigorous data validation to ensure accuracy, conduct regular audits of AI models to check for fairness and reliability, and maintain a healthy balance between automation and human expertise. This combination helps safeguard both operational integrity and informed decision-making.

How does machine learning improve real-time stress testing and tail risk management?

Machine learning is transforming the way real-time stress testing and tail risk management are handled, offering immediate, practical insights into shifting market dynamics. This capability enables asset managers to swiftly refine strategies, recalibrate risk limits, and tackle emerging vulnerabilities before they develop into larger issues.

With its ability to process massive datasets in real time, machine learning uncovers patterns and irregularities that conventional systems often overlook. This gives firms the edge to act decisively and confidently, staying ahead of market fluctuations while minimizing unforeseen risks.
